“Why Can’t I Be You?” by Luis Tsukayama Cisneros is licensed under CC BY 2.0

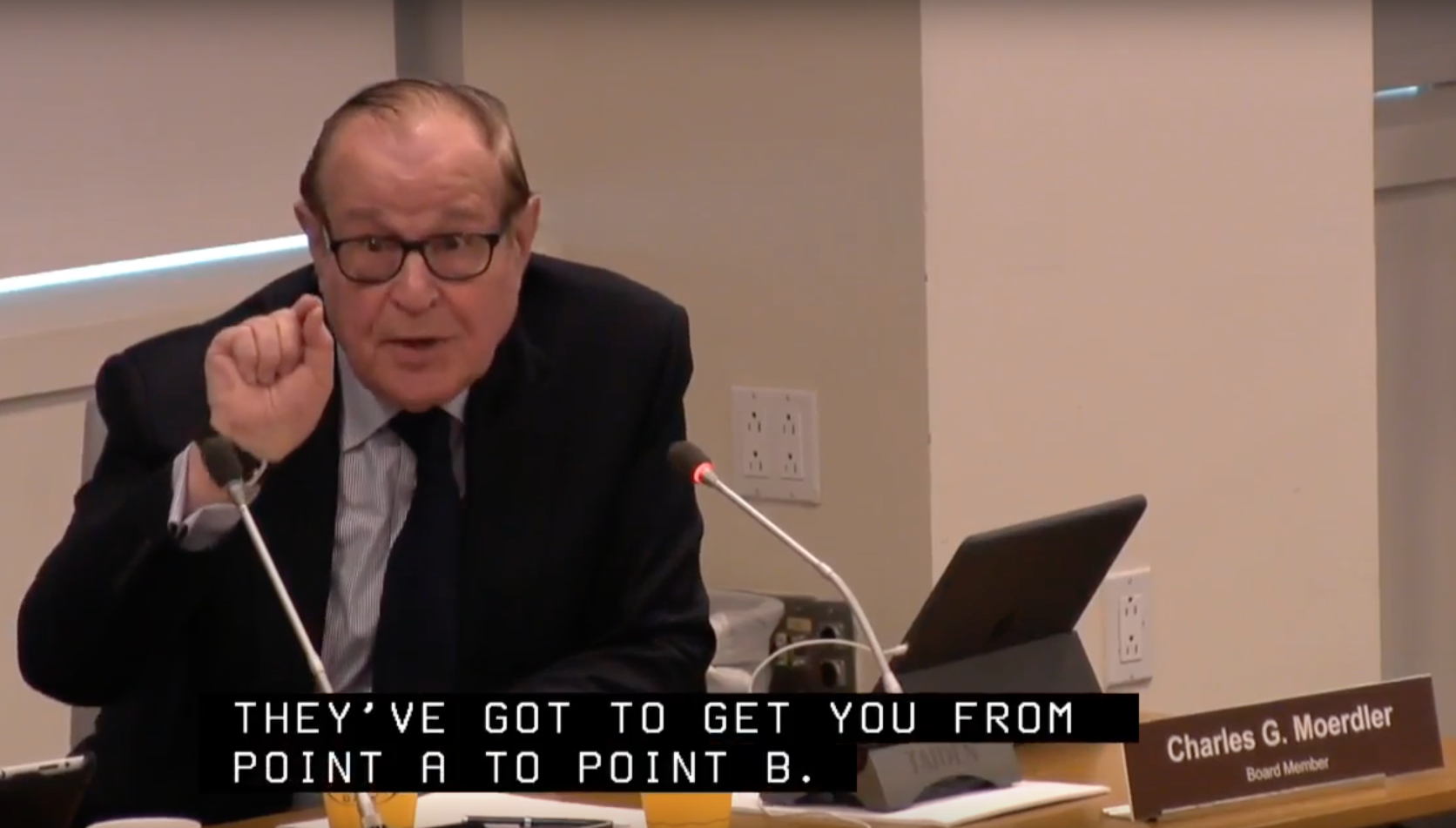

“I really would appreciate it if we could…put aside the jargon, and say how we get this done.” -MTA Board Member Charles Moerdler to MTA President Veronique Hakim, March 2016

During the MTA Board’s March meeting, a longstanding internal debate over how best to evaluate subway performance heated up. Under scrutiny was the ability of the agency’s core service performance indicators, “on-time performance” and “wait assessment,” to account for two types of delay: extra time spent waiting in subway stations and extra time spent on trains themselves.

Charles Moerdler addresses his fellow board members at the March 2016 meeting.

Board members Jeffrey Kay and Charles Moerdler made impassioned pleas to “once and for all make a decision” about which indicator to rely on. Kay also acknowledged a system-wide problem with longer delays that neither indicator measures meaningfully.

It’s encouraging that at least several board members seem eager to direct energy towards improving subway service rather than evaluating ways to evaluate service. However, why debate a choice between two flawed performance indicators rather than determine how the MTA can best measure and report to riders on performance?

In other words, the MTA board is having the wrong debate. The agency could quickly adopt a performance indicator that would better reflect reality from the rider’s point of view and help the MTA manage its service more effectively: excess journey time. Lauded by transit experts around the world as best practice, excess journey time legibly measures both types of delay mentioned above, and can be calculated using information the MTA already collects and analyzes.

Excess journey time powerfully illuminates where system performance is lacking, and creates an incentive for agency staff to focus resources to solve the problems that matter the most — the longest delays that affect the greatest number of people.

How does it work?

Excess journey time compares passengers’ average actual journey time (including time spent waiting at stops and delays once aboard vehicles) with the amount of time the schedule says their journeys should take.

Excess journey time is easier to understand (for management and the public alike) and more useful than “wait assessment,” as it gives more weight to long delays than short ones. It can also be calculated to give more weight to delays affecting a greater number of passengers. Consider it the people’s performance indicator. If excess journey time for the 6 train is two minutes, the average 6 train rider loses two minutes on each ride due to delays. And a rush-hour meltdown affecting thousands would register more strongly than a construction-related delay at 3 a.m.
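To make the arithmetic concrete, here is a minimal sketch of a passenger-weighted excess journey time calculation. The `TripGroup` record, its field names, and the sample numbers are illustrative assumptions, not the MTA’s data format or method.

```python
from dataclasses import dataclass


@dataclass
class TripGroup:
    """A set of riders who made the same journey (hypothetical record)."""
    scheduled_min: float  # journey time the schedule promises, waiting included
    actual_min: float     # journey time riders experienced, waiting included
    riders: int           # number of passengers in this group


def excess_journey_time(groups):
    """Average minutes lost per rider: actual minus scheduled, weighted by riders."""
    total_riders = sum(g.riders for g in groups)
    if total_riders == 0:
        raise ValueError("need at least one rider")
    lost_minutes = sum((g.actual_min - g.scheduled_min) * g.riders for g in groups)
    return lost_minutes / total_riders


# Made-up numbers: the same 15-minute delay hits 1,000 riders at rush hour
# in one case and 50 riders at 3 a.m. in the other.
on_time = TripGroup(scheduled_min=20, actual_min=20, riders=9000)
rush_hour_meltdown = TripGroup(scheduled_min=20, actual_min=35, riders=1000)
overnight_delay = TripGroup(scheduled_min=20, actual_min=35, riders=50)

print(excess_journey_time([on_time, rush_hour_meltdown]))  # 1.5
print(excess_journey_time([on_time, overnight_delay]))
```

Because each delay is multiplied by the number of riders who sat through it, the rush-hour case moves the average far more than the overnight one.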

In contrast, “wait assessment” has major deficiencies. Its basic measure is the percentage of train arrivals that keep passengers from waiting more than 25 percent longer than scheduled. But it’s not obvious what it means to have a “good” wait assessment score. The A Line has a 12-month weekday wait assessment of 71.9%, but what does that mean for riders? Wait assessment is indifferent to how late a train is or how many riders are affected by its lateness. Excess journey time accounts for these omissions. Nonetheless, MTA spokespeople stoutly defend this imprecise metric.
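The blind spot is easy to see in a small sketch. It assumes one reading of the measure: a gap between trains passes if it is no more than 25 percent longer than the scheduled headway, and the score is the share of gaps that pass. The function names and the sample gaps are hypothetical.

```python
def wait_assessment(gaps_min, scheduled_headway_min, tolerance=0.25):
    """Share of gaps between trains within scheduled headway plus the tolerance."""
    if not gaps_min:
        raise ValueError("need at least one gap")
    limit = scheduled_headway_min * (1 + tolerance)
    passing = sum(1 for gap in gaps_min if gap <= limit)
    return passing / len(gaps_min)


def extra_gap_minutes(gaps_min, scheduled_headway_min):
    """Crude severity check: total minutes by which gaps exceed the schedule."""
    return sum(max(0, gap - scheduled_headway_min) for gap in gaps_min)


# Made-up days on a line scheduled to run every 4 minutes.
mild_day = [4, 4, 4, 6]    # one gap runs 2 minutes long
bad_day = [4, 4, 4, 30]    # one gap runs 26 minutes long

print(wait_assessment(mild_day, 4), wait_assessment(bad_day, 4))      # 0.75 0.75
print(extra_gap_minutes(mild_day, 4), extra_gap_minutes(bad_day, 4))  # 2 26
```

Both days earn the same score even though one of them stranded riders for half an hour, and neither calculation knows how many riders were on the platform.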

To get riders where they need to go within reliable time-frames, the MTA must break new ground in measuring performance as well as commit to making the information public.

The MTA should report excess journey time alongside its existing on-time performance indicator. On-time performance measures the agency’s ability to deliver the service it has planned, which is essential for a system as complex as New York’s.

A transit system with perfect on-time performance would have all but eliminated both station and in-vehicle delays. It is no coincidence that the most reliable rail transit systems in the world also have exceptional on-time performance, upwards of 90 percent. Contrast this with NYC Subway on-time performance of 68.3%, lower than many bus systems. In addition to being intuitively understandable, this indicator aligns closely with the agency’s operational goal of providing service according to the published timetables promised to the public.

New York City Transit has the data it needs to implement this change in performance management and reestablish itself as an industry leader. However, today it lags behind peers like the Massachusetts Bay Transportation Authority, whose Back on Track website is automatically updated daily with new performance statistics, making it easy for riders to explore data trends.

Instead of endlessly debating service reliability metrics, the MTA should use the adoption of excess journey time as a jumping-off point for a larger conversation about regular public reporting of performance indicators that comprehensively monitor NYC transit service.
